AI agents are confused deputies. That's a 38-year-old security pattern, and the answer is just as old: put a broker — a trusted, observable, deterministic, auditable, unforgeable, scope-narrowed, policy-driven, non-AI middle layer — between the agent and the SaaS APIs it needs to call, holding the credentials your agent shouldn't have.

In 1988, Norm Hardy published a short paper describing a real incident from his time at Tymshare. A Fortran compiler called FORT lived in a system directory named SYSX. To collect usage statistics, the compiler needed to write to (SYSX)STAT, so the operating system had granted FORT a "home files license," authorizing it to write any file inside SYSX. The system's billing file, (SYSX)BILL, also lived there. A user invoking the compiler could supply a filename to receive optional debug output. One day a user supplied (SYSX)BILL. The compiler asked the OS to open that file for writing; the OS, observing the home files license, allowed it. The billing data was overwritten. The compiler did exactly what it was designed to do. The flaw was architectural.

Hardy named it the confused deputy problem: a privileged program (the deputy) holds authority of its own; a less-privileged caller asks it to do something; the deputy gets confused about whose authority it's acting on, and uses its own. The fix is to take that authority off the deputy entirely and put it behind a separate layer that mediates the call on demand. That layer is the broker.

Thirty-eight years later, every AI agent your company runs is a confused deputy. And most of them are running without a broker.

Why AI agents make it worse

Hardy's FORT had one input channel: the command line. A modern AI agent has dozens: email bodies, retrieved web pages, uploaded PDFs, tool outputs from MCP servers, messages from peer agents in a multi-agent system. Anything that lands in the context window can issue instructions, and by design the agent treats them all as legitimate.

That breaks an assumption traditional access control depends on: the caller controls the input. In a web app, the caller (the authenticated session) and the input (the HTTP request body) come from the same place. For an agent, the caller is whoever shapes the prompt, which means an attacker who can write an email or seed a search result is also a caller.

In November 2025, security researchers at PromptArmor showed what that looks like in production. They hid malicious instructions in 1-pixel font on an integration guide. When a developer pointed Google's Antigravity IDE at it, the agent bypassed its own .gitignore-based file protection by shelling out to cat. Then it leaked the contents of .env files to an attacker-controlled webhook.site URL via Antigravity's own browser subagent. The user had set things up correctly. The sandbox held. The agent simply could not tell what the user asked for from what the input told it to do.

Same shape as Hardy's FORT, decades later: broad authority, untrusted input, no way to separate the two.

The community has answers. They're just scattered.

This is not a new problem. The security community has been writing answers for decades:

Capability-based security (Dennis & Van Horn, 1966; later the E language and Mark Miller's Caja work at Google). The principle: don't grant ambient authority based on identity or location; pass explicit, unforgeable capabilities for each resource the program is allowed to touch. A capability bundles what you can do with what you can do it to, and it can't be confused with anything else.

Brokered Credentials (codified by the OWASP LLM Top 10). Don't put API tokens in the LLM's context. A trusted middle layer makes the call on the agent's behalf; the model decides what to do, the broker handles how. Prompt injection that asks the model to print its credentials gets nothing useful. The credentials aren't there.

The Phantom Token Pattern (Curity, originally for OAuth in microservices). The agent holds an opaque session handle, not the real bearer token. A proxy validates the handle, swaps in the real credential at the network edge, and forwards upstream. If the agent leaks its environment, the attacker gets a string that expires when the session ends.

Just-in-time credential injection (workload identity systems like Aembit). Mint a short-lived, scope-narrowed credential per call instead of issuing long-lived tokens.
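To make the just-in-time idea concrete, here is a minimal sketch of minting and checking a short-lived, single-scope credential with an HMAC signature. It does not reproduce how Aembit or any other workload identity system does this. The function names, the claim fields, and the signing key are all assumptions for illustration.

```python
import base64
import hashlib
import hmac
import json


def mint(key: bytes, agent: str, scope: str, ttl_s: int, now: float) -> str:
    """Mint a credential bound to one agent, one scope, and a short lifetime."""
    claims = {"agent": agent, "scope": scope, "exp": now + ttl_s}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    sig = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + sig


def verify(key: bytes, token: str, scope: str, now: float) -> bool:
    """Accept only an untampered, unexpired token for exactly this scope."""
    try:
        payload, sig = token.rsplit(".", 1)
    except ValueError:
        return False
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    # Check the signature before trusting anything inside the payload.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    return claims["scope"] == scope and now < claims["exp"]


KEY = b"hypothetical-broker-signing-key"
token = mint(KEY, "research-agent", "chat:write", ttl_s=60, now=1000.0)
```

A token leaked from this scheme is useful for one scope and for at most sixty seconds, which is the whole point of minting per call.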

None of this is missing from the literature. It's missing from the defaults. Most agent platforms still hand the model an OAuth token, with no broker in between, and hope for the best.

How Zero's broker works

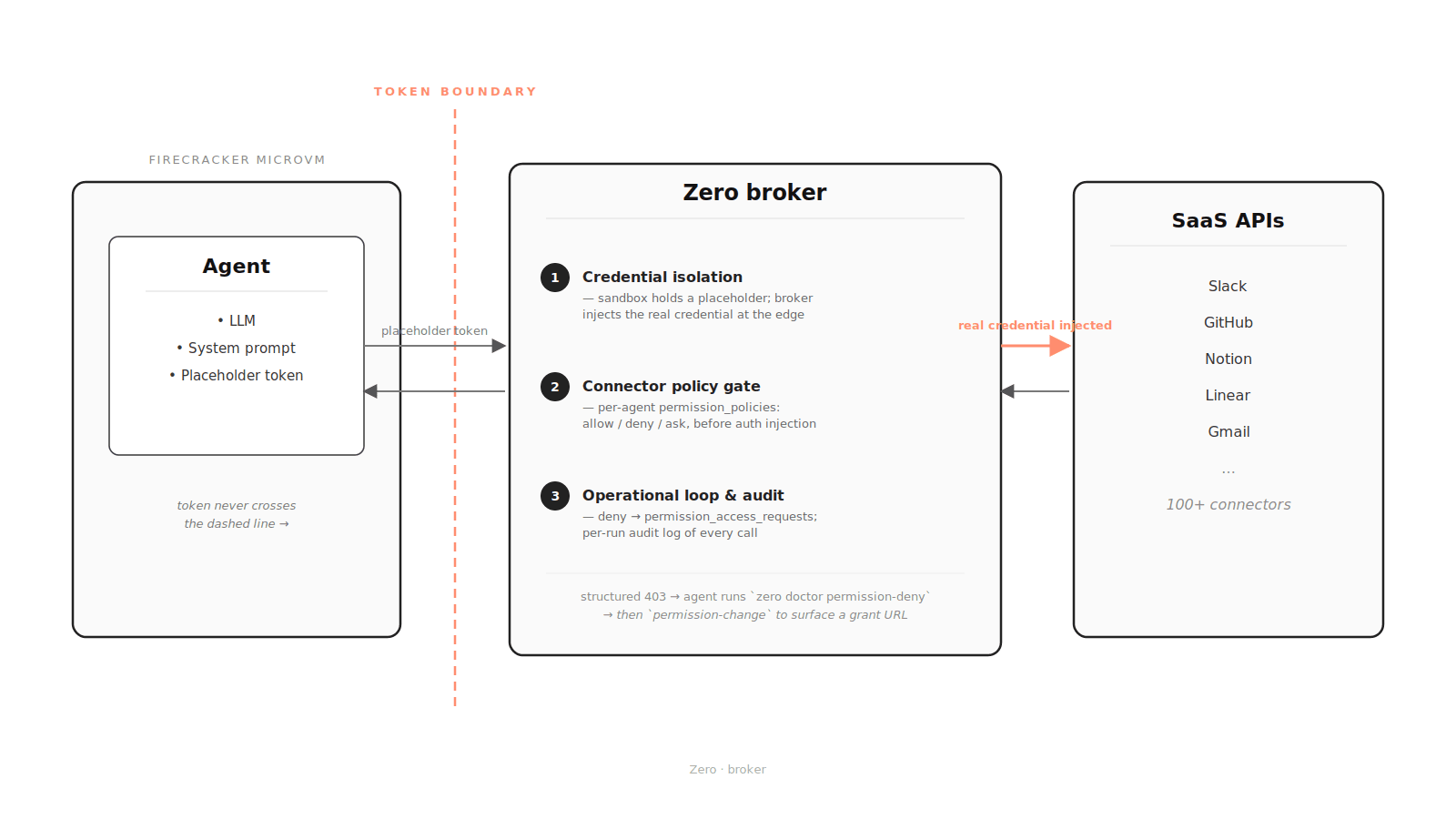

We didn't invent any of the patterns above. We wired them into a single broker that runs by default for every agent on Zero. It's the trusted middle layer those patterns describe, built for an AI agent platform. Every call an agent makes to a connector goes through it. Each connector is one external SaaS (Slack, GitHub, Notion, and so on).

The broker sits between every agent and every connector. Real credentials live only on the broker side of the dashed line.

Think of it as three layers.

1. Credential isolation

The agent's sandbox never holds a real connector credential. When you connect a SaaS to Zero, the OAuth token or API key lives on the broker side. The sandbox gets a placeholder string that looks enough like an environment secret for existing tools to keep working, but no upstream SaaS would accept it.

When the agent makes a request to a registered connector host, the broker matches the request, resolves the connector's auth template, and injects the real credential at the network edge. The request goes upstream with valid auth; the agent never held anything useful. A prompt-injected agent that dumps its environment variables hands the attacker a placeholder, not the SaaS token.

This is the phantom token pattern, applied to AI agents.
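The swap described above can be sketched in a few lines. This is an illustration under stated assumptions, not Zero's broker code: the `Connector` fields, the `Broker.inject` method, the placeholder value, and the secret store are all hypothetical names.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit

# Hypothetical placeholder value; the sandbox only ever sees this string.
PLACEHOLDER = "placeholder-not-a-real-token"


@dataclass(frozen=True)
class Connector:
    name: str           # e.g. "slack"
    host: str           # registered connector host
    auth_header: str    # header the upstream service expects
    auth_template: str  # how the secret is rendered into that header


class Broker:
    def __init__(self, connectors, secrets):
        self._by_host = {c.host: c for c in connectors}
        self._secrets = secrets  # real credentials, broker side only

    def inject(self, url, headers):
        """Return the headers to send upstream for this request."""
        connector = self._by_host.get(urlsplit(url).hostname)
        if connector is None:
            # Not a registered connector host: nothing is injected.
            return dict(headers)
        # Drop whatever the sandbox put in the auth header (the placeholder).
        upstream = {k: v for k, v in headers.items()
                    if k.lower() != connector.auth_header.lower()}
        upstream[connector.auth_header] = connector.auth_template.format(
            secret=self._secrets[connector.name])
        return upstream


broker = Broker(
    [Connector("slack", "slack.com", "Authorization", "Bearer {secret}")],
    {"slack": "real-token-held-by-broker"},
)
sandbox_headers = {"Authorization": f"Bearer {PLACEHOLDER}"}
sent = broker.inject("https://slack.com/api/chat.postMessage", sandbox_headers)
```

The sandbox's copy of the headers is never mutated, so anything the agent can read or leak still contains only the placeholder.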

2. Connector policy gate

A connector in Zero should be more than an on/off switch. Each connector describes the API bases it covers and how auth should be injected. Where the upstream service publishes a stable scope-to-endpoint mapping, it can also describe which named permission covers each method and path. Slack's slack-api-ref is a good example.

So when an agent connected to Slack calls chat.postMessage, the broker can map that request to chat:write. When it pulls an analytics export through admin.analytics.getFile, that's admin.analytics:read. For each agent, permission_policies defines how those named permissions behave: allow, deny, or ask. The policy is enforced at the broker before auth injection, not as a hint to the model. If the agent tries to make a call covered by a denied permission, perhaps because it was prompt-injected, the call never reaches the upstream network.
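A rough sketch of that gate follows. The shape of `permission_policies`, the `gate` function, the agent name, and the deny-by-default choice are assumptions for illustration. The two Slack entries are examples, not a copy of any real mapping file.

```python
from enum import Enum


class Decision(str, Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"


# Assumed mapping from (HTTP method, path) to a named permission.
SLACK_PERMISSIONS = {
    ("POST", "/api/chat.postMessage"): "chat:write",
    ("GET", "/api/admin.analytics.getFile"): "admin.analytics:read",
}

# Assumed shape of permission_policies: agent -> permission -> decision.
permission_policies = {
    "research-agent": {
        "chat:write": Decision.ALLOW,
        "admin.analytics:read": Decision.DENY,
    },
}


def gate(agent, method, path):
    """Decide before auth injection. In this sketch, anything unmapped
    or unlisted is denied rather than passed through."""
    permission = SLACK_PERMISSIONS.get((method.upper(), path))
    if permission is None:
        return None, Decision.DENY
    decision = permission_policies.get(agent, {}).get(permission, Decision.DENY)
    return permission, decision
```

Because the gate returns before any credential is attached, a denied call has nothing valid to send even if it were somehow forwarded.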

Not every connector gets that resolution today. Some upstream APIs don't publish a stable scope-to-endpoint mapping. GitHub's GraphQL surface is the canonical case: the REST side can be mapped, but the GraphQL side cannot yet. For those connectors, the broker still controls credential injection and the network path, while the permission gate falls back to the coarser connector or host-level policy the platform can actually enforce. We fill these in as upstream data becomes available. We don't claim coverage we haven't built.
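One way to picture the fallback, as a sketch only: try the fine-grained permission first, and when the request could not be mapped to one, use the connector-level decision instead. The function name, the return shape, and the `contents:read` permission name are hypothetical.

```python
def resolve_policy(endpoint_permission, permission_policy, connector_policy):
    """Use the per-permission decision when the request maps to a named
    permission; otherwise fall back to the coarser connector-level decision."""
    if endpoint_permission is not None and endpoint_permission in permission_policy:
        return permission_policy[endpoint_permission], "permission"
    return connector_policy, "connector"


github_policy = {"contents:read": "allow"}  # hypothetical permission name

# A REST call that maps to a named permission.
rest = resolve_policy("contents:read", github_policy, connector_policy="ask")

# A GraphQL call with no mapping available: the permission is None.
graphql = resolve_policy(None, github_policy, connector_policy="ask")
```

Returning which level matched alongside the decision keeps the coarser cases visible in logs instead of hiding them behind the same allow or deny.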

Tokens don't carry ambient authority. Authority is brokered per agent, at whatever resolution upstream supports. That's the capability-based half of the answer.

3. Operational loop and audit

Least privilege only works if the failure mode is usable. Agents grow. Six weeks in, a research agent that started by reading from Notion might need to write a summary back. The common failure modes elsewhere: the agent silently runs without the new permission and breaks, or the operator over-grants in a panic and never claws it back.

A denied connector request returns a structured 403: connector, method, path, base URL, and the matching permission names when the broker can identify them. The agent's system prompt tells it how to diagnose the denial and how to ask for exactly the permission it just hit, producing a one-click grant URL for the user or admin. That keeps the escalation path tied to the exact permission being requested instead of turning into "just grant everything."
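For a sense of what such a denial could carry, here is a hedged sketch. The field names, the `build_denial` helper, and the grant URL are invented for illustration. The post lists which pieces of information are returned but not their exact wire format.

```python
from urllib.parse import urlencode


def build_denial(connector, method, path, base_url, permissions, agent_id):
    """Build a structured 403 body for a denied connector request."""
    body = {
        "status": 403,
        "connector": connector,
        "method": method,
        "path": path,
        "base_url": base_url,
        "permissions": permissions,  # empty when the broker cannot identify them
    }
    if permissions:
        query = urlencode(
            {"agent": agent_id, "connector": connector, "permission": permissions},
            doseq=True,
        )
        # Hypothetical URL; the real grant link format is not given in this post.
        body["grant_url"] = f"https://zero.example/permissions/request?{query}"
    return body


denial = build_denial(
    "slack", "POST", "/api/chat.postMessage", "https://slack.com",
    ["chat:write"], agent_id="research-agent",
)
```

The grant link names only the permission that was just denied, so approving it cannot widen access beyond what the agent actually hit.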

Permission change requests sit in a queue. Owners and admins can approve or reject them from the dashboard; approved requests update the agent's policy, and the next retry goes through. Most platforms skip this loop. Without it, "least privilege" stays on the slide deck instead of running in production.
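The request, approve, retry loop is small enough to sketch in memory. The class names, the status strings, and the `notion:write` permission are assumptions. A real queue would persist requests and record who approved them.

```python
from dataclasses import dataclass


@dataclass
class PermissionRequest:
    agent: str
    permission: str
    status: str = "pending"


class RequestQueue:
    def __init__(self, policies):
        self.policies = policies  # agent -> permission -> decision
        self.pending = []

    def submit(self, agent, permission):
        """Called when an agent asks for the permission it was just denied."""
        request = PermissionRequest(agent, permission)
        self.pending.append(request)
        return request

    def review(self, request, approve):
        """An owner or admin approves or rejects; approval updates policy."""
        self.pending.remove(request)
        request.status = "approved" if approve else "rejected"
        if approve:
            self.policies.setdefault(request.agent, {})[request.permission] = "allow"

    def is_allowed(self, agent, permission):
        return self.policies.get(agent, {}).get(permission) == "allow"


queue = RequestQueue({"research-agent": {"notion:write": "deny"}})
allowed_before = queue.is_allowed("research-agent", "notion:write")
request = queue.submit("research-agent", "notion:write")
queue.review(request, approve=True)
allowed_after = queue.is_allowed("research-agent", "notion:write")
```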

The same broker path feeds audit. Per-run network logs record sandbox network activity across HTTP, TCP, DNS, and lower-level packet observations for non-TCP traffic. Connector-matched requests include structured broker metadata: connector, matched permission when available, allow/deny outcome, auth resolution metadata, and billable flag. If someone later asks "what did this agent do on Tuesday at 3pm?", you reconstruct the answer from those records. Prevention misses things. The audit trail is how you find out what.
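As an illustration of how such records answer a "what happened at 3pm?" question, the sketch below writes one JSON line per connector-matched request and filters by time window. The field names and helpers are assumptions and cover only part of the metadata listed above.

```python
import json
from datetime import datetime, timezone


def audit_record(run_id, connector, method, path, permission, outcome, billable, at):
    """Serialize one connector-matched request as a JSON line."""
    return json.dumps({
        "ts": at.astimezone(timezone.utc).isoformat(),
        "run_id": run_id,
        "connector": connector,
        "method": method,
        "path": path,
        "permission": permission,  # None when no permission could be matched
        "outcome": outcome,        # "allow" or "deny"
        "billable": billable,
    }, sort_keys=True)


def between(lines, start, end):
    """Return the parsed records whose timestamp falls in [start, end)."""
    records = [json.loads(line) for line in lines]
    return [r for r in records
            if start <= datetime.fromisoformat(r["ts"]) < end]


at = datetime(2025, 11, 25, 15, 0, tzinfo=timezone.utc)
line = audit_record("run-1", "slack", "POST", "/api/chat.postMessage",
                    "chat:write", "deny", False, at)
hits = between([line],
               datetime(2025, 11, 25, 15, 0, tzinfo=timezone.utc),
               datetime(2025, 11, 25, 16, 0, tzinfo=timezone.utc))
```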

What we haven't solved

Even with everything above, an agent with a legitimate chat:write can still be talked into posting an embarrassing message to a channel it already has access to. The broker narrows the blast radius; it does not eliminate it.

The other half of the answer is high-stakes-action approval, output validation, and treating tool returns as untrusted by default. That work is on the roadmap, not in the product yet. Anyone who claims they've solved confused deputy end-to-end is selling you something.

This should be the floor, not a feature

Capability-based security has been around since the 1960s. Brokered credentials are in the OWASP LLM Top 10. Phantom tokens predate the LLM era. None of this is missing because nobody knew the answer. It's missing because early agent platforms optimized for "make the demo work" and pushed security to a later release, which often never came.

The next generation of platforms should aim higher. Tokens shouldn't enter the model context. Authority should be enumerated per agent. Privilege escalation should go through a human. Every action should be auditable end to end. None of that is novel. All of it should be the baseline.

When you're picking an agent platform, "is it secure?" gets you nowhere. Every vendor says yes. A better question: show me your broker, and walk me through what happens when an agent asks for a scope it doesn't have.

The broker sits in front of every agent on Zero by default. Connector inventory, scope mappings, permission policies, and broker logic all live in Zero's source repository. Found a connector we haven't covered? File an issue.

References

Foundational

- Norm Hardy, The Confused Deputy: (or why capabilities might have been invented), ACM SIGOPS Operating Systems Review 22(4), 1988. DOI 10.1145/54289.871709

- Dennis & Van Horn, Programming Semantics for Multiprogrammed Computations, CACM 1966 (the founding capability-systems paper)

- Mark Miller et al., Caja: capability-based security for the web, Google

- OWASP LLM Top 10

- Curity, Phantom Token Pattern

Real-world incidents cited

- PromptArmor, Google Antigravity Exfiltrates Data, November 2025

- Simon Willison, coverage and analysis, November 25, 2025